WhaleGPT

AI can work wonders with human language. Can it also help us talk to whales?

You learnt to imitate the people around you when you were three months old. At twelve months, you managed to attach words to meanings. As you grew older, you became increasingly conversant in language, able to gossip and exchange information to your heart’s content.

Humans are intrinsically social beings. For us, communication is key, be it with other humans, animals, or even extraterrestrials! The question of whether we are the only species with big ideas out there has been around for years.

The Search for Extraterrestrial Intelligence, or SETI, project sets out to search for intelligence in outer space. It began as a small project within NASA; today, it is one of the few organisations in America looking for signs of life in the universe.

But what about life on Earth? Do any non-human species on Earth brim with ideas that we only fail to identify?

To answer that, we must first ask: what sets human communication apart from animal communication?

The American linguist and anthropologist Charles Hockett has an answer. He identifies several ‘design features’ of human languages, which make our communication unique. We associate human sounds with meanings. We talk of things that do not exist, of the past and the future. We create an infinite number of sentences. We can lie. We can deceive. We use language to talk about, well, language!

To what extent can animals do this, though?

According to Hockett, animals and humans share several features: our communication is mostly audio based (speaking and hearing), we can tell which direction a sound is coming from, and our speech is temporary. Sound waves disappear once someone stops speaking.

However, humans have additional qualities that set us apart from animals: we have rigid structures to our languages, and our utterances are generally made to convey a meaning. They do not serve any primary biological function. In contrast, a dog panting to cool down is a biological function first, and a message second. Animals also cannot use language to describe other languages.

That’s not to say that animals do not have well-established communication systems. Studies show that bats make sounds and argue over food, distinguish between genders and call each other by names. Parrots, like Alex (a famous subject of a thirty-year experiment), and bonobos, like Kanzi, can learn and associate words with objects. Dolphins can understand differences in word order (who jumps over whom, for example). Bees communicate through body movements and sounds.

We do not, however, fully understand the extent to which animals communicate or the entire content of their language. Some progress is under way. Bee researchers are working to understand bee communication and to create a robotic bee called RoboBee that tricks bees into thinking the robot is a real bee. This robot hasn’t been very successful so far.

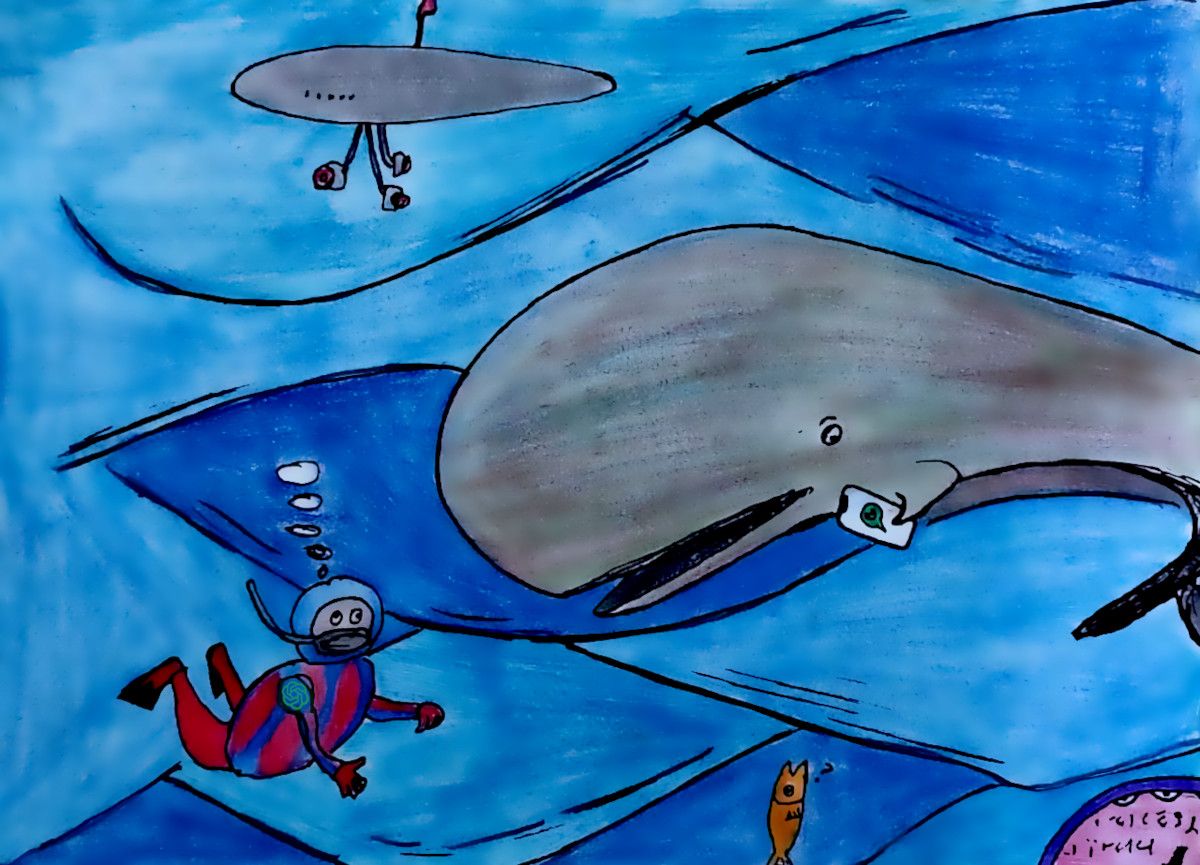

A project called CETI — Cetacean Translation Initiative — was initiated to help scientists study and understand the language of sperm whales.

You may ask, why whales?

Whales belong to the group of animals called cetaceans (which also includes dolphins). Sperm whales have larger brains than humans. In fact, sperm whale brains are larger than those of all other animals on Earth! Human and sperm whale brains both have ‘spindle neurons’, which enable our reasoning, memory and communication skills. Whales are also emotionally intelligent. They too feel grief and empathy.

Whales have complex communication systems. They are social animals like us and live in groups, which are led by female whales. Like humans, they also bring up their young for a prolonged period. Different groups of whales show different behaviours and have language dialects, much like humans and their different cultures. They can make sounds, vary them and copy each other. Furthermore, because they live in water, they rely less on smell and sight and more on sound.

Communicating in mountainous regions is not easy, which is why humans have come up with a solution that broadcasts language effectively: whistling. The mountain shepherds of northern Turkey communicate through whistles that can carry dozens of times farther than ordinary speech.

Instead of whistles, whales talk to each other using short bursts of clicks called codas. Lasting around two seconds, these bursts of 2 to 40 clicks are used to communicate while catching prey and moving around. Codas can be specific to a group, and each group of whales has about twenty different codas.
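To make the idea of a coda concrete, here is a minimal Python sketch that groups a list of click times into bursts. It assumes the clicks have already been picked out of a recording and timestamped in seconds; the function name `split_into_codas` and the half-second silence threshold are illustrative assumptions, not details of how researchers actually do it. Only the 2 to 40 click range comes from the description above.

```python
from typing import List


def split_into_codas(click_times: List[float],
                     max_gap: float = 0.5,   # assumed silence (s) that ends a burst
                     min_clicks: int = 2,    # from the text: codas have 2 to 40 clicks
                     max_clicks: int = 40) -> List[List[float]]:
    """Group click timestamps (in seconds) into bursts separated by silences."""
    codas: List[List[float]] = []
    current: List[float] = []
    for t in sorted(click_times):
        # A long enough silence closes the burst collected so far.
        if current and t - current[-1] > max_gap:
            if min_clicks <= len(current) <= max_clicks:
                codas.append(current)
            current = []
        current.append(t)
    # Do not forget the final burst.
    if min_clicks <= len(current) <= max_clicks:
        codas.append(current)
    return codas


# Made-up click times: a five-click burst, a four-click burst, and a stray click.
clicks = [0.0, 0.2, 0.4, 0.6, 0.8, 3.0, 3.1, 3.2, 3.6, 7.5]
codas = split_into_codas(clicks)
print(len(codas))  # 2 (the lone click at 7.5 s is too short to count as a coda)
```

The stray click is dropped because a single click falls below the two-click minimum; a real system would also have to cope with overlapping whales and background noise, which this toy example ignores.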

Using these codas, whales can talk to each other over distances of metres to kilometres. Not surprisingly, they produce more codas when they socialise.

Researchers have already been collecting recordings of these codas, but we don’t know what they mean or how they work. Do they have a grammar, and can they be combined to produce whale sentences?

One usually doesn’t think of other animals as using grammar. We think of simple meanings, such as a growl indicating aggression or a yelp of pain, but nothing as nuanced as the Shakespearean “They have been at a great feast of languages, and stolen the scraps”. But is there a reason to think that?

Whistled Turkish isn’t quite a new language, but more like a way to encode the vowels and consonants into whistled notes. That means it certainly has a grammar — because it’s the same grammar as spoken Turkish itself. And it’s not just Turkish: almost any language can be converted to a whistled language — and it has been, in numerous densely vegetated or ruggedly terrained regions around the world.

Sign languages, in their silence, have their own grammar too; a grammar which is encoded not in words and punctuation but in signs and gestures. That’s right: it’s not just pointing or miming; this is a context where the way you move your wrist may be considered incorrect or ungrammatical.

As you can see, there’s no rule that says grammar has to come from human speech. If whales are capable of communication, there’s nothing preventing them from developing their own grammar as well.

And consider this: young whales, known as calves, can take at least two years to produce recognisable codas. That’s quite a bit longer than you, the human child, took to start uttering your first words.

To understand the whale’s language, we need to find answers to three questions. What are their basic sounds? Do whales use grammar? And lastly, do these emitted sounds mean something?

To set about answering these questions, researchers are planning to, naturally, use the buzzword of the day: Artificial Intelligence.

The recent successes of machine-learning methods and Natural Language Processing (NLP) in understanding human communication mean that AI is at the forefront of analysing whale sounds. Today’s NLP tools can segment speech into basic sounds and even learn the underlying grammar in sentences. Recent breakthroughs in AI allow researchers to translate between two human languages without needing a mapping between them, or a “Rosetta Stone”.

Project CETI will build on these discoveries to provide a dictionary of the whale’s language. Using AI, the team will listen to and translate the communication of these majestic creatures and perhaps even talk back.

Existing machine learning methods are already capable of automatically detecting codas and classifying them by clan and by individual whale. Recent advances in unsupervised learning can help with understanding whale language even when we have no prior knowledge of where a meaningful unit of communication begins and ends.
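As a toy illustration of what learning without labels can look like, the Python sketch below groups codas by their rhythm alone. It assumes each coda is already available as a list of strictly increasing click times in seconds; the functions `rhythm` and `group_by_rhythm` and the `tolerance` value are hypothetical names and settings chosen for this example, and the simple greedy grouping stands in for the far more sophisticated methods real researchers use.

```python
import math
from typing import List


def rhythm(coda: List[float]) -> List[float]:
    """Gaps between clicks, as fractions of the coda's total duration.

    Assumes at least two clicks with strictly increasing times.
    """
    gaps = [b - a for a, b in zip(coda, coda[1:])]
    total = sum(gaps)
    return [g / total for g in gaps]


def group_by_rhythm(codas: List[List[float]],
                    tolerance: float = 0.1) -> List[List[int]]:
    """Greedily group codas whose rhythms are close; returns lists of indices."""
    groups = []  # each group remembers the rhythm of its first member
    for i, coda in enumerate(codas):
        r = rhythm(coda)
        for g in groups:
            same_length = len(g["pattern"]) == len(r)
            if same_length and math.dist(g["pattern"], r) <= tolerance:
                g["members"].append(i)
                break
        else:
            # No existing group is close enough, so start a new one.
            groups.append({"pattern": r, "members": [i]})
    return [g["members"] for g in groups]


# Made-up codas: two evenly spaced ones and two with a fast start.
example_codas = [
    [0.0, 0.2, 0.4, 0.6, 0.8],    # evenly spaced
    [0.0, 0.1, 0.2, 0.6, 1.0],    # three quick clicks, then two slow ones
    [5.0, 5.3, 5.6, 5.9, 6.2],    # evenly spaced, but slower overall
    [9.0, 9.05, 9.1, 9.3, 9.5],   # same fast-start rhythm, twice as fast
]
print(group_by_rhythm(example_codas))  # [[0, 2], [1, 3]]
```

Dividing each gap by the total duration means a coda played faster or slower still lands in the same group, so the grouping reflects the pattern of the clicks rather than their speed. No one told the program what the groups should be, which is the sense in which the approach is unsupervised.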

However, a lot more data is needed. A significant reason for the success of human language models is that plenty of human language data is available. Models such as GPT-3, the basis of the famous ChatGPT, were trained on over 10¹⁰ data points (that’s 1 followed by ten zeros).

CETI scientists plan on using multiple technologies available to locate and record groups of sperm whales.

One such technology is buoyed arrays with sensors placed every several hundred metres from the surface down to the depth at which sperm whales hunt, which is approximately 1200 metres.

They also plan to attach recording devices to whales to identify who’s talking to whom. Aquatic drones will take audio and video recordings of several animals simultaneously, to observe behaviours and communications within a group of whales near the surface. Aerial drones will help monitor whale populations and film the whales’ behaviour.

The next step would be to build the whole pipeline: collecting the data, storing it, processing it, and running machine learning algorithms on it to detect and classify whale codas.

Operation “talk to whales” is in full swing, and sperm whales will no doubt notice the intrusion. One can’t help but wonder what they’ll be thinking about all this, and whether we’ll be able to ask them about it one day.

Perhaps they’ll coda each other, discussing and pondering the new devices that humans are sending in, and wondering if, for all the effort spent on searching for extraterrestrial life forms, there was intelligent life waiting for them just a step away from their ocean.